Supported Models

Well-supported LLMs

Fine-tuning is currently available for the following models:

| Model Name | Parameters | Architecture | License | LoRA Adapter | Turbo LoRA Adapter ⚡ (New) | Context Window (tokens) | Supported Fine-Tuning Context Window (tokens) |
|---|---|---|---|---|---|---|---|
| solar-1-mini-chat-240612 | 10.7 billion | Solar | Custom License | ✅ | ✅ | 32768 | 32768 |
| solar-pro-preview-instruct | 22.1 billion | Solar | Custom License | ✅ | ❌ | 4096 | 4096 |
| mistral-7b | 7 billion | Mistral | Apache 2.0 | ✅ | ✅ | 32768 | 32768 |
| mistral-7b-instruct | 7 billion | Mistral | Apache 2.0 | ✅ | ✅ | 32768 | 32768 |
| mistral-7b-instruct-v0-2 | 7 billion | Mistral | Apache 2.0 | ✅ | ✅ | 32768 | 32768 |
| mistral-7b-instruct-v0-3 | 7 billion | Mistral | Apache 2.0 | ✅ | ✅ | 32768 | 32768 |
| mistral-nemo-12b-2407 | 12 billion | Mistral | Apache 2.0 | ✅ | ❌ | 131072 | 32768 |
| mistral-nemo-12b-instruct-2407 | 12 billion | Mistral | Apache 2.0 | ✅ | ❌ | 131072 | 32768 |
| zephyr-7b-beta | 7 billion | Mistral | MIT | ✅ | ✅ | 32768 | 32768 |
| llama-3-1-8b | 8 billion | Llama-3 | Meta (request for commercial use) | ✅ | ❌ | 62999 | 32768 |
| llama-3-1-8b-instruct | 8 billion | Llama-3 | Meta (request for commercial use) | ✅ | ❌ | 62999 | 32768 |
| llama-3-8b | 8 billion | Llama-3 | Meta (request for commercial use) | ✅ | ❌ | 8192 | 8192 |
| llama-3-8b-instruct | 8 billion | Llama-3 | Meta (request for commercial use) | ✅ | ❌ | 8192 | 8192 |
| llama-3-70b | 70 billion | Llama-3 | Meta (request for commercial use) | ✅ | ❌ | 8192 | 8192 |
| llama-3-70b-instruct | 70 billion | Llama-3 | Meta (request for commercial use) | ✅ | ❌ | 8192 | 8192 |
| llama-2-7b | 7 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| llama-2-7b-chat | 7 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| llama-2-13b | 13 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| llama-2-13b-chat | 13 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| llama-2-70b | 70 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| llama-2-70b-chat | 70 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| codellama-7b | 7 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| codellama-7b-instruct | 7 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| codellama-13b-instruct | 13 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 16384 | 16384 |
| codellama-70b-instruct | 70 billion | Llama-2 | Meta (request for commercial use) | ✅ | ❌ | 4096 | 4096 |
| mixtral-8x7b-instruct-v0-1 | 46.7 billion | Mixtral | Apache 2.0 | ✅ | ❌ | 32768 | 7168 |
| phi-2 | 2.7 billion | Phi-2 | Microsoft | ✅ | ❌ | 2048 | 2048 |
| phi-3-mini-4k-instruct | 3.8 billion | Phi-3 | Microsoft | ✅ | ❌ | 4096 | 4096 |
| phi-3-5-mini-instruct | 3.8 billion | Phi-3 | Microsoft | ✅ | ❌ | 131072 | 16384 |
| gemma-2b | 2.5 billion | Gemma | | ✅ | ❌ | 8192 | 8192 |
| gemma-2b-instruct | 2.5 billion | Gemma | | ✅ | ❌ | 8192 | 8192 |
| gemma-7b | 8.5 billion | Gemma | | ✅ | ❌ | 8192 | 8192 |
| gemma-7b-instruct | 8.5 billion | Gemma | | ✅ | ❌ | 8192 | 8192 |
| gemma-2-9b | 9.24 billion | Gemma | | ✅ | ❌ | 8192 | 8192 |
| gemma-2-9b-instruct | 9.24 billion | Gemma | | ✅ | ❌ | 8192 | 8192 |
| gemma-2-27b | 27.2 billion | Gemma | | ✅ | ❌ | 8192 | 4096 |
| gemma-2-27b-instruct | 27.2 billion | Gemma | | ✅ | ❌ | 8192 | 4096 |
| qwen2-1-5b | 1.54 billion | Qwen | Tongyi Qianwen | ✅ | ❌ | 131072 | 32768 |
| qwen2-1-5b-instruct | 1.54 billion | Qwen | Tongyi Qianwen | ✅ | ❌ | 131072 | 32768 |
| qwen2-7b | 7.62 billion | Qwen | Tongyi Qianwen | ✅ | ❌ | 131072 | 32768 |
| qwen2-7b-instruct | 7.62 billion | Qwen | Tongyi Qianwen | ✅ | ❌ | 131072 | 32768 |
| qwen2-72b | 72.7 billion | Qwen | Tongyi Qianwen | ✅ | ❌ | 131072 | 8192 |
| qwen2-72b-instruct | 72.7 billion | Qwen | Tongyi Qianwen | ✅ | ❌ | 131072 | 8192 |
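For several models the supported fine-tuning context window is smaller than the model's full context window, so it can help to check training example lengths against the right column before starting a job. The following is a minimal sketch, not part of any SDK: the `CONTEXT_WINDOWS` dictionary and the `fits_fine_tuning_window` helper are hypothetical names, the dictionary copies only a few rows from the table, and how over-length examples are actually handled during training is not stated here.

```python
# Hypothetical lookup copied from a few rows of the table above:
# model name -> (context window, supported fine-tuning context window), in tokens.
CONTEXT_WINDOWS = {
    "mistral-7b-instruct-v0-3": (32768, 32768),
    "llama-3-1-8b-instruct": (62999, 32768),
    "mixtral-8x7b-instruct-v0-1": (32768, 7168),
    "qwen2-72b-instruct": (131072, 8192),
}


def fits_fine_tuning_window(model_name: str, num_tokens: int) -> bool:
    """Return True if an example of num_tokens tokens fits within the
    supported fine-tuning context window listed for model_name."""
    _, fine_tuning_window = CONTEXT_WINDOWS[model_name]
    return num_tokens <= fine_tuning_window


if __name__ == "__main__":
    # An 8000-token example fits Mixtral's 32768-token context window,
    # but exceeds its 7168-token fine-tuning window.
    print(fits_fine_tuning_window("mixtral-8x7b-instruct-v0-1", 8000))
```

The token count would need to come from the tokenizer of the chosen base model, since token counts differ between model families.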

Many of the latest OSS models are released in two variants:

- Base model (llama-2-7b, etc.): These models are trained primarily on a text-completion objective.

- Instruction-tuned (llama-2-7b-chat, mistral-7b-instruct, etc.): These models have been further trained on (instruction, output) pairs so that they respond better to instruction-style inputs. The instructions effectively constrain the model's output to align with the desired response characteristics or domain knowledge.

Read more about the different types of LoRA adapters here.

Best-Effort LLMs (via Hugging Face)

Best-effort fine-tuning is also offered for any Hugging Face LLM that meets the following criteria:

- Has the "Text Generation" and "Transformer" tags

- Does not have a "custom_code" tag

- Is not post-quantized (e.g., a model with a quantization method such as "AWQ" in its name)

- Has text inputs and outputs

"Best-effort" means we will try to support these models, but support is not guaranteed.
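The criteria above can be screened roughly before submitting a job. The sketch below is an illustration under stated assumptions, not an official eligibility check: `meets_best_effort_criteria` and `QUANTIZATION_MARKERS` are hypothetical names, the tag strings `text-generation` and `transformers` are assumed to be how the Hub reports the "Text Generation" and "Transformer" tags, and only "AWQ" among the markers comes from the text above. The "text inputs and outputs" criterion is not checked, and passing this screen does not guarantee that a model is supported.

```python
from typing import Iterable

# Name substrings that suggest post-quantized weights. Only "awq" comes from
# the criteria above; "gptq" and "gguf" are assumed additional examples.
QUANTIZATION_MARKERS = ("awq", "gptq", "gguf")

# Assumed Hub spellings of the "Text Generation" and "Transformer" tags.
REQUIRED_TAGS = {"text-generation", "transformers"}


def meets_best_effort_criteria(model_id: str, tags: Iterable[str]) -> bool:
    """Rough screen of a model ID and its tags against the listed criteria."""
    normalized = {tag.lower() for tag in tags}
    has_required_tags = REQUIRED_TAGS <= normalized
    has_custom_code = "custom_code" in normalized
    looks_quantized = any(m in model_id.lower() for m in QUANTIZATION_MARKERS)
    return has_required_tags and not has_custom_code and not looks_quantized


if __name__ == "__main__":
    # The tag list here is made up for illustration; in practice it would be
    # read from the model's page or metadata.
    print(meets_best_effort_criteria(
        "BioMistral/BioMistral-7B", ["transformers", "text-generation"]
    ))
```

Taking the tags as a plain argument keeps the helper free of network calls, so the same function can be used with tags copied from a model page or fetched by other means.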

Fine-tuning a custom LLM

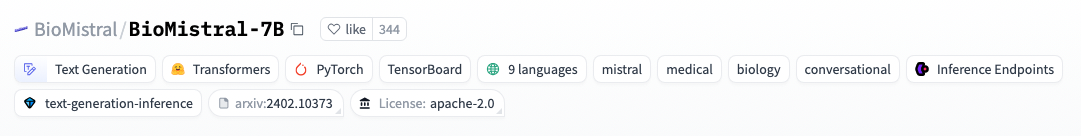

- Get the Hugging Face ID for your model by clicking the copy icon on the custom base model's page, e.g., "BioMistral/BioMistral-7B".

- Pass the Hugging Face ID as the base_model.

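from predibase import Predibase, FinetuningConfig

# Authenticate the client; replace the placeholder with your own API token
pb = Predibase(api_token="<PREDIBASE API TOKEN>")

# Create an adapter repository
repo = pb.repos.create(name="bio-summarizer", description="Bio News Summarizer", exists_ok=True)

# Start a fine-tuning job; this call blocks until training is finished
adapter = pb.adapters.create(
    config=FinetuningConfig(
        base_model="BioMistral/BioMistral-7B"
    ),
    dataset="bio-dataset",
    repo=repo,
    description="initial model with defaults"
)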

Private serverless deployment needed for inference

Note that if you fine-tune a custom model not on our shared deployments list, you'll need to deploy the custom base model as a private serverless deployment in order to run inference on your newly trained adapter.